When a Top L1’s Credibility Crashes on a Certificate That Won’t Download

As a Web3 developer, I used to give chains like Avalanche the benefit of the doubt: an established L1 with decent rankings should at least get the basics right. Then I entered its hackathon and went through its official academy. What I saw changed my mind. A chain that can’t serve a PDF certificate, and whose hackathon signup page crashes browsers, has no business claiming technical authority or a serious developer ecosystem. Dig a little deeper and it’s clear that these surface bugs are symptoms of something worse: entrenched authority, centralization, technical arrogance, and a community that has learned not to speak up. The “AI jury” I’ve been proposing is one concrete way to cut through that.

Disclaimer: This piece reflects my own views and experience. The certificate download failure, browser crashes during hackathon registration, and Telegram ban are things I personally experienced (I have screenshots for the registration crashes). Other facts and figures are cited from public news or official documentation where possible.

Certificates and the Academy: From Onboarding to “Internal Server Error”

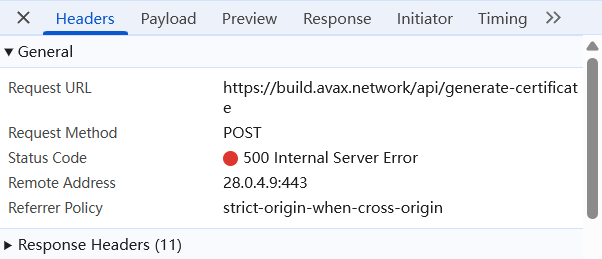

Start with the official academy certificate. The flow lives at build.avax.network/academy/avalanche-l1/avalanche-fundamentals/get-certificate. It’s Avalanche’s entry-level track (Snowman consensus, multi-chain design, custom VMs), spread across five core modules with 21 quizzes you must pass to unlock the certificate. The design looks serious. The problem is the last step: many developers finish the course, pass every quiz, click “Download certificate,” and get nothing but an internal server error.

A static PDF download failing with a backend error is hard to excuse. This isn’t some third-party site; it’s Avalanche’s own developer academy, aimed at developers worldwide. If the team can’t get the simplest, lowest-friction feature right, it’s fair to question how seriously it takes developer experience. And this is just one example of a broader pattern: a chain that launched its mainnet in 2020 and rode Snowman consensus into the top tier is now stuck in an authority trap it shows no sign of escaping.

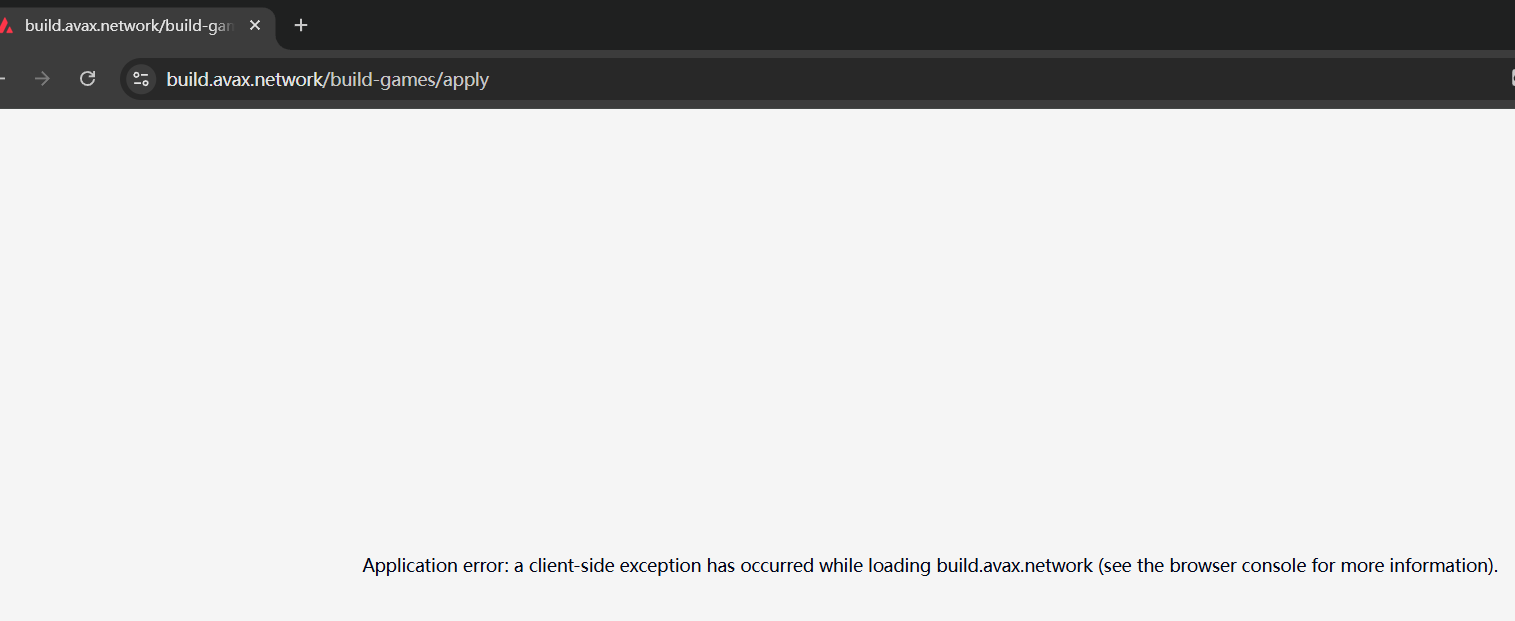

Hackathon Signup and Review: Broken Onboarding, Broken Judging

Things didn’t get better at the hackathon. On the same official stack, I hit browser crashes repeatedly while filling out the form and uploading materials; it took several attempts to submit, and I kept screenshots. Other developers have reported similar issues. Hackathons are supposed to be a main channel for attracting good builders. When the first step—signing up—is this bad, even experienced devs are put off. The common complaints are familiar: messy docs, flaky APIs, buggy tooling. At the end of the day it’s not that the tech can’t be done right; it’s that ordinary developers aren’t the priority. Effort goes into protecting the institution and its narrative.

The contrast is stark. On one side you have basic features failing (certificates, signup) and a serious track record of incidents. In March 2023, the C-Chain stopped producing blocks for over an hour due to a node software bug (CoinDesk, CryptoSlate), and exchanges had to pause deposits and withdrawals. That’s not a minor glitch; it’s a core infrastructure failure. The Avalanche bridge relies on only four Wardens, a trust set so small it amounts to a single point of failure. The ecosystem has also seen repeated contract exploits, including Platypus Finance losing ~$8.5M to a flash-loan attack (over 2.2M AVAX at the time), which points to slow and inadequate security and incident response.

On the other side, the same chain runs a hackathon that collects 2,000+ projects and touts a “vibrant ecosystem.” Do the math. A team spends ten days building an MVP; giving each project ten minutes (reading the logic, checking the demo) is the least one could ask. For 2,000 projects that’s 20,000 minutes, or about 333 hours. Split across five judges, that’s roughly 67 hours each: more than eight full eight-hour workdays of nothing but review.
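The arithmetic is easy to check. This short Python sketch just recomputes the load from the figures in the paragraph above (2,000 projects, ten minutes each, five judges); the eight-hour workday is an assumption, and the function name is illustrative.

```python
# Back-of-the-envelope hackathon review load.
# Inputs are illustrative parameters, not official Avalanche figures.

def review_load(projects: int, minutes_per_project: float, judges: int,
                hours_per_workday: float = 8.0) -> dict:
    """Return total and per-judge review effort for a given judging setup."""
    total_minutes = projects * minutes_per_project
    per_judge_hours = total_minutes / judges / 60
    return {
        "total_minutes": total_minutes,
        "per_judge_hours": per_judge_hours,
        # If a judge literally never stopped (24 h/day)...
        "per_judge_days_nonstop": per_judge_hours / 24,
        # ...versus normal working days of `hours_per_workday` hours.
        "per_judge_workdays": per_judge_hours / hours_per_workday,
    }


if __name__ == "__main__":
    load = review_load(projects=2000, minutes_per_project=10, judges=5)
    for key, value in load.items():
        print(f"{key}: {value:.1f}")
```

With those inputs it prints 20,000 total minutes, about 66.7 hours per judge, about 2.8 days if a judge never slept, and about 8.3 eight-hour workdays. A review that wraps up in a few days therefore cannot be giving every project ten minutes.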

In practice, review is wrapped up in a few days. Worse: if you don’t make the cut, you get no explanation and no feedback. That creates a perverse incentive. As a judge, you’re paid the same whether you review carefully or skim. Nobody can verify that you actually opened the repo or ran the code. With no accountability and no transparency, the rational choice is to cut corners—reject batches of projects to lighten the load.

That’s what makes it so dispiriting for builders. The process is a black box. You think you’re being judged on your code; you’re really being judged on a few seconds of attention. The “AI jury” I propose is meant to end that: an AI’s “pay” is compute, so it can scan all 2,000 projects around the clock and give every rejected team a concrete, quantified report. That’s the kind of transparency Web3 should aim for, rather than letting weeks of work die to a judge’s rational laziness.
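A “quantified report” could take the form of a weighted rubric that turns per-criterion scores and written notes into feedback every team receives, whether or not it advances. This is a hypothetical illustration, not the AI jury’s actual implementation. The criteria, weights, the 6.0 cutoff and names such as `build_report` are all assumptions, and how each score is produced (by a model or a human) is deliberately left out.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical rubric: criteria and weights are illustrative and sum to 1.0.
RUBRIC_WEIGHTS = {
    "builds_and_runs": 0.30,
    "core_logic": 0.30,
    "demo_matches_claims": 0.20,
    "docs_and_tests": 0.20,
}


@dataclass
class CriterionScore:
    name: str
    score: float  # 0-10 scale; how it is produced is out of scope here
    note: str     # concrete, human-readable reason shown to the team


def build_report(project: str, scores: List[CriterionScore], cutoff: float = 6.0) -> str:
    """Combine per-criterion scores into a weighted total plus written feedback."""
    by_name = {s.name: s for s in scores}
    missing = set(RUBRIC_WEIGHTS) - set(by_name)
    if missing:
        # Refuse to produce a verdict unless every criterion was actually scored.
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total = sum(w * by_name[n].score for n, w in RUBRIC_WEIGHTS.items())
    verdict = "advanced" if total >= cutoff else "not advanced"
    lines = [f"{project}: {total:.1f}/10 ({verdict})"]
    for name, weight in RUBRIC_WEIGHTS.items():
        s = by_name[name]
        lines.append(f"  - {name} (weight {weight:.0%}): {s.score:.1f} - {s.note}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_report("demo-dapp", [
        CriterionScore("builds_and_runs", 8, "repo builds and its tests pass"),
        CriterionScore("core_logic", 5, "swap math has no slippage check"),
        CriterionScore("demo_matches_claims", 6, "demo covers 2 of 3 advertised flows"),
        CriterionScore("docs_and_tests", 4, "README lacks setup steps"),
    ]))
```

With the example scores the weighted total is 5.9, so the team does not advance. It still sees exactly which criteria pulled the score down and why. Keeping the weights in a single table also makes the rubric itself open to audit.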

Governance and Centralization: Authority Lock-in and Board Exodus

Underlying all of this is centralization. Avalanche is effectively held hostage by a single authority. Ava Labs controls the core codebase, the foundation, and ecosystem resources. On-chain governance is barely meaningful; independent developers and teams with different technical views are easily sidelined or pushed out when they challenge that control. Mainnet validators must stake at least 2,000 AVAX (on the order of tens of thousands of dollars), so nodes are dominated by large players and ordinary devs have little say. In that setup, authority ossifies and innovation suffocates.

Even the foundation’s own board had enough. In early March 2025, three Avalanche Foundation directors resigned (reported by Cryptopolitan and others). Their departure laid bare the dynamic: they had tried to push for more diversity and transparency and to listen to the community, but every initiative was blocked by Ava Labs. Power was concentrated elsewhere; the foundation acted as a puppet. Ideological clash and structural impotence led to a collective exit—proof that Avalanche’s governance is stuck in “power at the center, infighting and waste,” and that people who actually want to improve the ecosystem end up leaving.

Getting Banned and the Emperor’s New Clothes: When Truth Becomes “Prohibited”

I couldn’t help commenting in a Telegram group: “At this review pace, I could ‘read’ a whole book in three seconds.” I didn’t attack anyone; I stated a fact. My account was banned from the group. That’s the pattern: when someone points out slapdash judging, broken sites, or inflated hype, the response isn’t to fix the problem but to remove the person who raised it.

What I didn’t expect was how that ban became a signal. After it happened, many participants reached out in private and agreed. They said this skepticism was already widespread; they just didn’t dare challenge Ava Labs’ narrative in public.

It gets worse. Other builders told me their projects had full MVPs and live sites, yet were rejected with reasons that were barely reasons—e.g. “too many applicants, we had to cut some.” When people asked for details in group chats, the organizers went silent.

That’s why I built the AI jury. Some of the teams that were “randomly cut” have already started submitting to my AI review system. It shows that people see through the performance: a big-name L1 talking about ecosystem, but unwilling to fix or even acknowledge broken review logic. When developers start seeking objective AI feedback instead of a human judge’s few seconds of attention, the kind of credibility that depends on centralized control and narrative control has already collapsed.

How Avalanche Stacks Up: Monad, Conflux, and the Numbers

In Web3, what ultimately matters is technology and honesty—code and experience, not seniority or marketing. A chain that can’t deliver a certificate, crashes browsers on signup, and has a history of core outages and highly concentrated power may still call itself a “top L1” and attract 2,000+ hackathon projects, but that’s a hollow shell.

They also keep missing a simple point: the first step toward improvement is admitting shortcomings; the heart of an ecosystem is respecting developers. Kicking out people who tell the truth, hiding bugs, and hoarding power leads to the same place as so many hyped but poorly run projects—burn through trust until there’s nothing left.

I’ve seen the opposite with Polkadot. I’m not saying its tech is perfect, but as a developer I feel treated like a human there. On Polkadot, feedback is used to improve things; on Avalanche, feedback gets you banned. Polkadot doesn’t silence or deplatform people who point out problems; its team listens, explains, and actually iterates. That’s the kind of posture that keeps developers and sustains an ecosystem.

On raw technical comparison, the public data on Avalanche vs. Monad and Conflux is telling:

| Dimension | Avalanche | Monad / Conflux | Takeaway |
|---|---|---|---|
| Execution | Serial / pseudo-parallel (three chains run asynchronously, but each chain executes serially) | Optimistic parallelism (Monad) / Tree-graph concurrency (Conflux) | Generational gap |
| Reported TPS | 100–300 (high latency, 3–5 s peaks) | Monad 8,000+ / Conflux 10,000+ | Orders of magnitude apart |
| Governance | Institutional control, bans, infighting | Monad: tech-led / Conflux: community-driven | Innovation vs. ossification |
| Developer experience | Certificate fails, signup crashes, messy docs | Performant tooling, clear docs, responsive support | Attitude shows |

TPS and related figures come from public claims or third-party tests; see the references for context and dates.

In short, Avalanche’s performance story belongs to an earlier generation of “fast” chains. The architecture has inherent limits and its evolution is effectively closed off, so advertised and real-world performance diverge. Monad’s optimistic parallelism and Conflux’s tree-graph design deliver a different class of throughput, and both chains keep engaging with the community and improving the experience.
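To make the “optimistic parallelism” label concrete, here is a toy Python sketch of the general technique. Every transaction in a block is executed against the same starting state, results are then committed in block order, and only the transactions whose inputs were changed by an earlier one are re-executed. This is not Monad’s client code: the key-value transaction model, the hypothetical `transfer` helper and all function names are assumptions, and the speculative phase runs in a plain loop rather than on parallel threads.

```python
from typing import Callable, Dict, List, Set, Tuple

State = Dict[str, int]
Reader = Callable[[str], int]
# Toy transaction model (an assumption): a pure function that reads keys
# through `read` and returns the key/value writes it wants to apply.
Tx = Callable[[Reader], Dict[str, int]]


def run_tx(tx: Tx, state: State) -> Tuple[Set[str], Dict[str, int]]:
    """Execute tx against `state`, recording every key it reads."""
    reads: Set[str] = set()

    def read(key: str) -> int:
        reads.add(key)
        return state.get(key, 0)

    writes = tx(read)
    return reads, writes


def execute_block_optimistically(txs: List[Tx], state: State) -> Tuple[State, int]:
    """Speculate on a shared snapshot, then commit in order, re-running stale txs."""
    snapshot = dict(state)
    # Phase 1: every tx runs against the same pre-block snapshot.
    # A real client would spread this across threads; a loop keeps the toy simple.
    speculative = [run_tx(tx, snapshot) for tx in txs]

    current = dict(state)
    dirty: Set[str] = set()  # keys written by txs already committed in this block
    reexecuted = 0
    # Phase 2: commit in block order. If a tx read any key that an earlier tx
    # wrote, its speculative result may be stale, so execute it again for real.
    for tx, (reads, writes) in zip(txs, speculative):
        if reads & dirty:
            _, writes = run_tx(tx, current)
            reexecuted += 1
        current.update(writes)
        dirty.update(writes)
    return current, reexecuted


def transfer(src: str, dst: str, amount: int) -> Tx:
    """Hypothetical balance transfer used only for this example."""
    def tx(read: Reader) -> Dict[str, int]:
        return {src: read(src) - amount, dst: read(dst) + amount}
    return tx


if __name__ == "__main__":
    start = {"alice": 100, "bob": 50, "carol": 0, "dave": 10}
    block = [
        transfer("alice", "bob", 30),   # independent of the next tx
        transfer("dave", "carol", 5),   # independent of the previous tx
        transfer("bob", "carol", 20),   # reads keys that both earlier txs wrote
    ]
    final, redone = execute_block_optimistically(block, start)
    print(final, "re-executed:", redone)
```

Running it prints `{'alice': 70, 'bob': 60, 'carol': 25, 'dave': 5} re-executed: 1`, the same state a strictly serial run produces. Only the third transfer is redone. The first two touch disjoint accounts, which is exactly the case where parallel speculation pays off.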

Avalanche is in an awkward spot: it doesn’t match the technical energy of newer chains like Monad and Conflux, it doesn’t deliver the rigor and accountability you’d expect from an old guard, and it doesn’t offer the openness of something like Polkadot. The “top L1” label has become a matter of optics, not substance.

Conclusion: Choose Chains by Tech, and by Attitude

For developers, choosing a chain means looking at both technical strength and how the team operates. Monad and Conflux demonstrate what’s possible with real performance and iteration; Polkadot shows that treating builders with respect pays off. Avalanche, by contrast, has been eroding trust through arrogance and half-hearted execution.

The AI jury isn’t a plug for a product—it’s a response to institutional indifference. The goal is to puncture inflated narratives, put code back at the center, and let merit matter more than connections, so that developers who put in the work can be seen and taken seriously.

A top chain’s credibility isn’t handed down by title or hype. It’s built from technology, experience, and respect. In Web3 there are no permanent “leaders,” only continuous innovation. Chains that cling to the past, hide problems, and silence critics will be left behind.

References

- Avalanche C-Chain / X-Chain outage (March 2023): CoinDesk, CryptoSlate

- Avalanche bridge and four Wardens: LI.FI analysis

- Platypus Finance flash-loan attack (~$8.5M): Cointelegraph

- Avalanche validator minimum stake (2,000 AVAX): Avalanche docs

- Avalanche Foundation board resignations (March 2025): Cryptopolitan

- Conflux Tree-Graph 3.0 and TPS (e.g. ~15k TPS): Conflux docs

- Monad testnet and performance: Monad